In the era of data-driven decision-making, mastering the intricacies of data processing is indispensable for aspiring data scientists and seasoned professionals alike. This project delves deep into the construction and automation of a robust ETL (Extract, Transform, Load) pipeline. From the meticulous setup of a Docker environment ensuring seamless deployment, to the strategic utilization of APIs, and the seamless automation of workflows through GitHub Actions, this post documents my journey in building an Anime Insights Dashboard powered by an Automated ETL pipeline.

Setting up a Docker Environment

Our project starts with Docker, a containerization platform that ensures consistency across different environments. I began by creating a Dockerfile, specifying the project’s dependencies in a requirements.txt file. After building the Docker image, it was pushed to my personal Docker Hub repository. This step ensures that the pipeline runs consistently, both locally and within GitHub Actions, setting the stage for the next processes.

# Use an appropriate base image, such as Python.

FROM python:3.11-slim

# Add git

RUN apt-get -y update

RUN apt-get -y install git

# Set the working directory inside the container.

WORKDIR /data_engineering

# Copy your requirements.txt file to the container.

COPY requirements.txt .

# Install the packages listed in requirements.txt, clearing the cache.

RUN pip install --no-cache-dir -r requirements.txtSetting up a Rapid API Account

Rapid API, a hub for various APIs, became our gateway to real-time data. Specifically, I tapped into the MyAnimeList API, obtaining access to valuable information about top airing anime. Authentication was handled through an API key, securely stored as a JSON file in the secrets folder of local development environment. The free tier of this API gives me 300 requests per month and some rate limiters, which are still more than sufficient for this project.

import requests

url = "https://myanimelist.p.rapidapi.com/anime/<myanimelist_id>"

headers = {

"X-RapidAPI-Key": "rapid-api-key",

"X-RapidAPI-Host": "myanimelist.p.rapidapi.com"

}

response = requests.get(url, headers=headers)

print(response.json())0:

title:"Bleach: Sennen Kessen-hen"

picture_url:"https://cdn.myanimelist.net/r/50x70/images/anime/1764/126627.jpg?s=196ea098ac1becd97fc0a4b50a949c2b"

myanimelist_url:"https://myanimelist.net/anime/41467/Bleach__Sennen_Kessen-hen"

myanimelist_id:41467

rank:1

score:9.1

type:"TV (13 eps)"

aired_on:"Oct 2022 - Dec 2022"

members:317644

1:

title:"Bocchi the Rock!"

picture_url:"https://cdn.myanimelist.net/r/50x70/images/anime/1448/127956.jpg?s=81e0f14a54c27c6d8046fced1a046f28"

myanimelist_url:"https://myanimelist.net/anime/47917/Bocchi_the_Rock"

myanimelist_id:47917

rank:2

score:8.86

type:"TV (12 eps)"

aired_on:"Oct 2022 - Dec 2022"

members:158662Using BigQuery API in Python

Interacting with Google BigQuery necessitated the creation of a service account. This will also generate credentials as a JSON file. These credentials were stored and loaded dynamically, ensuring that the keys are not comitted to the repository. I have added a 'date_pulled' column, appended to the data fetched from the top airing anime endpoint. This column is used to prevent duplicate uploads and minimize unnecessary API calls.

Getting the top 50 airing anime for the day only takes 1 API call. Getting the details for each anime however, takes 1 API call for each. I use BigQuery to look for all myanimelist_id stored in my top airing anime table that doesn’t have a corresponding row yet in my anime details table. Except for the start of a new season, only a few anime will enter and exit this list so the API request for anime details will be lessened as the season progresses.

Data Processing using Pandas

Pandas, a Python library for data processing, is used to transform and clean the JSON response of the API. Nested fields were flattened, and values in the “list of dictionaries” format were simplified just be a comma separated string of names. Sometimes, an anime ID will not yet have details available so the API will return an error. These rows will not be added to our data. To simulate a data store, processed data was saved as Parquet files. Then a different function reads these Parquet files and uploads them to BigQuery.

Creating GitHub Actions

GitHub Actions, a solution to run your code on a cloud-based environment, was employed to orchestrate the ETL pipeline. I modularized the process into functions, allowing for distinct workflows for daily and weekly tasks. YAML files were crafted, defining these workflows as cron jobs.

- The script will launch a container using my specified Docker image.

- Then it will checkout the code from the repository.

- Next it will decrypt my API keys that are not committed.

- It will then run the python scripts to perform the process discussed above.

- Finally, it will write a log file and push it to the repository.

This automation not only saved time but also ensured the reliability of the entire process.

API Keys and Secrets

Securing sensitive information was a priority. Since the code requires API keys which I cannot just hard code into the script, I needed another way for the Actions environment to access them. I used 64-bit encryption to encrypt the JSON files, and then added them as secrets to the repository. GitHub Actions then decrypts them in a secrets folder similar to my local set up.

- name: Decode Base64

run: |

mkdir -p secrets

current_directory=$(pwd)

echo "Current working directory is: $current_directory"

echo "${{ secrets.API_HEADERS }}" | base64 --decode > secrets/headers.json

echo "${{ secrets.GOOGLEAPI_KEYS }}" | base64 --decode > secrets/google_api_keys.jsonLogging

Logging often overlooked but invaluable for clarity and traceability. Print statements were replaced with logging statements, capturing every step of the ETL process. I dynamically create a new log file for each day and I use Github Actions to commit and push these log files to the repository.

- name: commit files

run: |

git config --global --add safe.directory /__w/data_engineering_learning/data_engineering_learning

git config --global user.email "action@github.com"

git config --global user.name "GitHub Action"

git add -A

git diff-index --quiet HEAD || (git commit -a -m "updated logs" --allow-empty)

- name: push changes

uses: ad-m/github-push-action@v0.6.0

with:

github_token: ${{ secrets.GITHUB_TOKEN }}

branch: mainConclusion

In this project, we built an Automated ETL Pipeline. From Docker setup to leveraging APIs and implementing automation with GitHub Actions, to finally loading the data into Big Query. The project doesn’t end here; in part two, we’ll explore the collected data, automate queries, create an updating dashboard.

Stay tuned for more!

Mastering Pipelines: Integrating Feature Engineering into Your Predictive Models

Master predictive modeling with Scikit-Learn pipelines. Learn the importance of feature engineering and how to prevent data leakage.

Unlocking Data Science: Your Easy Docker Setup Guide

Ready to dive into data science? Learn how to set up your development environment using Docker for a seamless and reproducible workspace. Say goodbye to compatibility issues and hello to data science success!

In this short project, I scraped all of Lebron's regular season points and plotted them in an interactive graph.

Predicting a Fitness Center’s Class Attendance with Machine Learning

In this project I analyzed a fitness center's attendance data to predict attendance rates of its group classes.

A marketing agency presents a promotional plan to a telecommunication company to increase its subscriber base and generate revenue. Using population and location data, we estimated the feasibility of this plan in this case study.

The tweet that started the trend was posted on June 12 (Philippine Independence day). On that tweet, the author stated that he dined in at a Tropical Hut branch, and he is their only customer. Despite the pandemic “restrictions”, commercial activity on malls and fast food chains are pretty much back to “normal”, so having only one customer is disheartening.

In this project I trained a transformer model to recognize words from audio.

In this article, I fine-tuned a pre-trained object detection model using a small custom dataset.

Fast and Free Deep Learning Demo with Gradio

In this article, we will build a simple deep learning based demo application that can be accessed publicly in Hugging Face spaces

The rate at which unreliable news was spread online in the recent years was unprecedented. In this project, I finetuned some language models to make an unreliable news classifier.

In this article, we will discuss how to quickly showcase your model publicly as a machine learning application for free.

In this article, I will discuss a way of accessing Google Cloud GPUs to train your Deep Learning projects.

Code This case study is my capstone project for the Google Data Analytics Certification offered through Coursera. I was given access to …

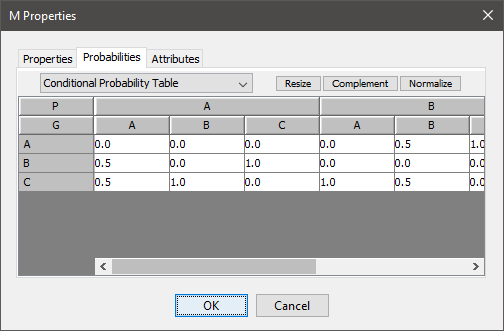

However, to make it a little bit more scalable, the tables are defined in a separate Google sheet, and imported into the Google Colab notebook. Each node is a separate worksheet, and the columns list the parent nodes, the name of the node, and probability.

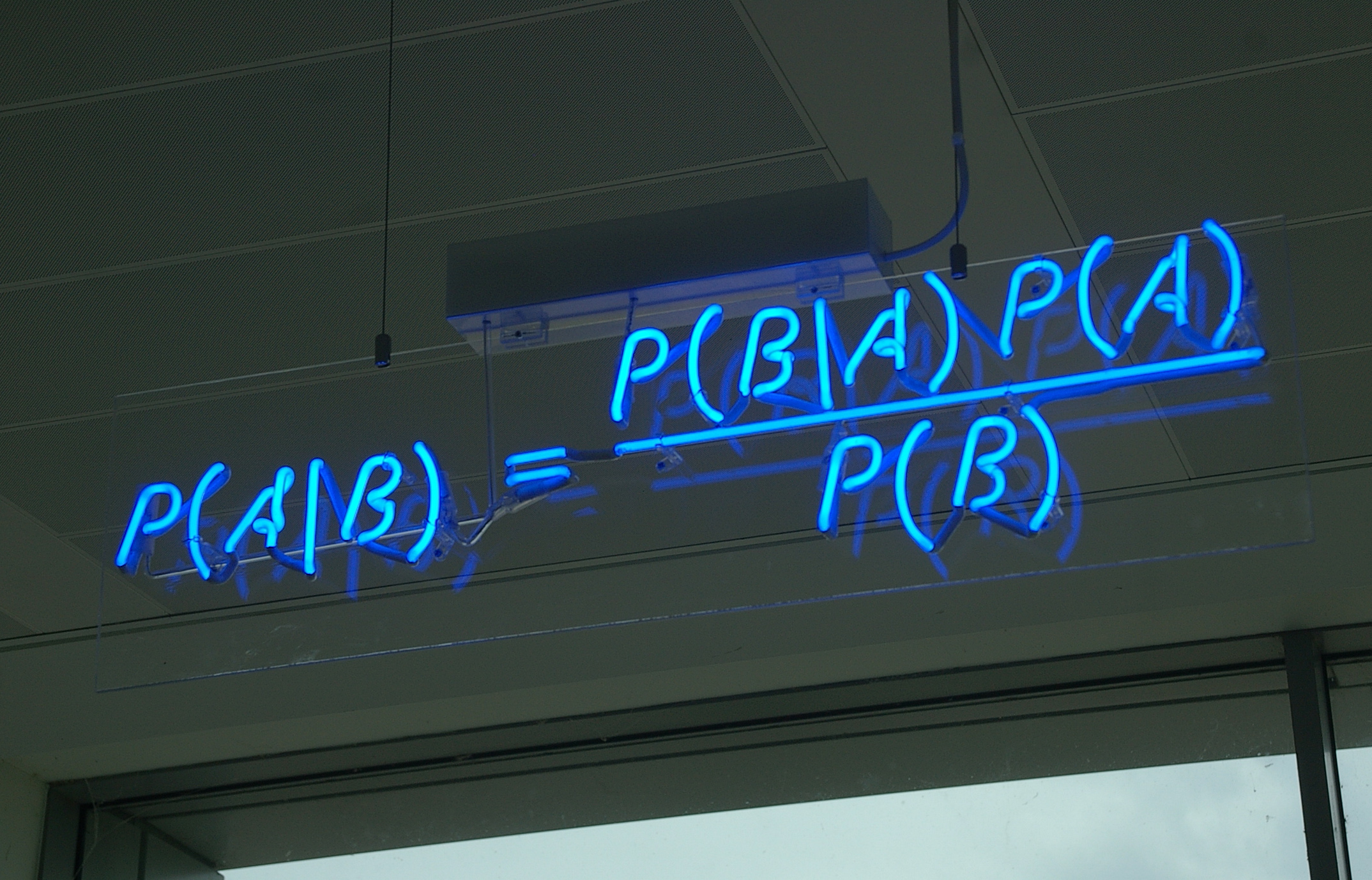

Bayesian Networks are a compact graphical representation of how random variables depend on each other. It can be used to demonstrate the …

In this article we will break down what the Fourier Transform does to a signal, then we will be using Python to compute and visualize the transforms of different waveforms.